We are excited to announce that the MI4MedFM submission portal is now officially open on OpenReview. We look forward to your innovative contributions!

MI4MedFM | MICCAI 2026

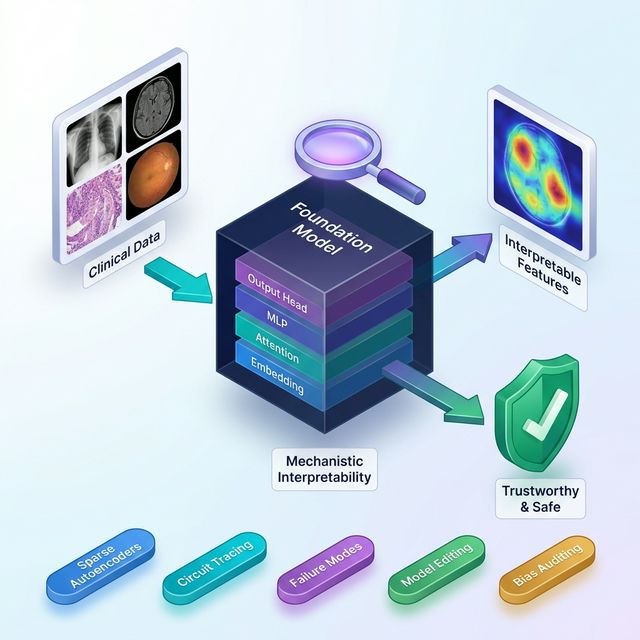

Mechanistic Interpretability for Medical Foundation Models

MI4MedFM is a workshop held in conjunction with MICCAI 2026. It focuses on understanding how medical foundation models compute, where they fail, and how mechanistic interpretability can make them safer, more robust, and more reliable for clinical deployment.

News

The official website for the MI4MedFM workshop at MICCAI 2026 is now live. Call for papers and important dates have been announced.

MI4MedFM has been officially accepted as a workshop at MICCAI 2026 in Abu Dhabi.

Why this workshop matters now

Medical foundation models are becoming central to imaging and multimodal clinical AI, but high-stakes deployment needs more than saliency maps or attention plots. MI4MedFM centers on mechanistic interpretability: understanding the internal computations that generate model behavior.

The workshop creates a focused MICCAI forum for methods such as sparse autoencoders, circuit discovery, activation patching, causal tracing, steering, and weight-space analysis, while keeping the discussion tightly connected to clinical validity, robustness, and deployment safety.

Four Core Pillars of MI4MedFM

Our scientific agenda is structured around four interlocking goals that translate mechanistic interpretability into robust clinical practice.

Inspect internal model mechanisms

Understand what features, subcircuits, and latent representations medical foundation models rely on when producing clinically relevant behavior.

- Sparse autoencoders and feature discovery

- Circuit analysis and causal tracing

- Activation-level understanding of decision pathways


Topics of Interest

We welcome submissions across a wide range of themes bridging foundation model interpretability with safety and deployment.

Features, circuits, and internal representations

Methods that uncover what medical models internally encode and how those computations are organized.

Clinically meaningful validation

Tests that determine whether discovered mechanisms align with trusted medical concepts and downstream behavior.

Spurious cues, shortcuts, and hallucinations

Mechanistic debugging for brittle reasoning, domain shift, bias, and unreliable multimodal outputs.

Editing, steering, and control

Using mechanism-level insight to improve robustness, correct behavior, and reduce harmful failure modes.

Monitoring unsafe states in practice

Interpretability-guided monitoring and uncertainty-aware signals for safer clinical deployment settings.

Scalable, usable open infrastructure

Frameworks and workflows that make mechanistic analysis practical for medical imaging and multimodal MedFMs.

Workshop Program

A focused 2-hour program featuring a keynote, oral presentations, and an interactive poster session with networking.

An invited talk framing trustworthy medical AI through the lens of mechanism and evidence.

Selected papers presented in concise oral sessions built for cross-disciplinary accessibility.

Poster-first visibility, direct discussion, and opportunities for clinicians and ML researchers to connect.

The Best Paper and Best Presentation awards are presented toward the end of the event.

Opening & Framing

Setting the agenda, scientific motivation, and workshop goals for the MICCAI audience.

Keynote Session

Flagship invited talk on trustworthy medical AI, interpretability, and clinical reliability.

Oral Presentations

Curated short talks presenting methods, evaluations, and lessons for medical foundation models.

Award Ceremony

Announcement of the Best Paper and Best Presentation awards, followed by concluding remarks.

Coffee Break & Networking

Poster interaction, demos, and in-depth discussion around methods and applications.

Keynote Speaker

We are honored to feature a leading pioneer driving the future of trustworthy and transparent clinical AI.

Call for Papers

We invite original research contributions on mechanistic interpretability for medical foundation models. Submissions are welcome in two tracks.

Important Dates (all deadlines are Anywhere on Earth, AoE)

July 15, 2026

August 5, 2026

August 18, 2026

October 2026

Workshop Awards

Proceedings Track

Original, unpublished research intended for the MICCAI satellite-events LNCS proceedings.

- Format: 8–10 pages (LNCS style) + references

- Review: Double-blind peer review

- Publication: Springer LNCS proceedings

Non-Archival Track

Extended abstracts, previously published work, or work currently under review elsewhere.

- Format: 4-page extended abstract + references

- Review: Light review for relevance and quality

- Publication: Not included in proceedings (non-archival)

Submission Guidelines

- Template: Use the official Springer LNCS LaTeX template

- Review: Double-blind, with conflict-of-interest safeguards

- Evaluation criteria: Relevance, rigor, clarity, validation, and reproducibility

Topics include, but are not limited to, those listed under Topics of Interest above.

Organizing Committee

Our international committee unites expertise in medical image analysis, foundation models, and trustworthy artificial intelligence.

Mohammad Yaqub

Associate Professor

MBZUAI

Muhammad Haris

Assistant Professor

MBZUAI

Muhammad Bilal

Professor

Birmingham City University

Dwarikanath Mahapatra

Assistant Professor

Khalifa University

Imran Razzak

Associate Professor

MBZUAI

Yutong Xie

Assistant Professor

MBZUAI

Ufaq Khan

Ph.D. Student

MBZUAI

Rishabh Lalla

Ph.D. Student

MBZUAI

Umair Nawaz

Ph.D. Student

MBZUAI

Namrah Rehman

Ph.D. Student

MBZUAI

Satyajit Kishore Tourani

Ph.D. Student

MBZUAI

Tausifa Jan Saleem

Postdoctoral Associate

MBZUAI

FAQ & Contact

Common questions about the workshop and how to reach the organizing committee.

When is the workshop taking place?

The MI4MedFM workshop is part of the MICCAI 2026 satellite events in Abu Dhabi. The exact date in October is TBA.

Will proceedings be published?

Yes. Submissions accepted under Track 1 (Proceedings Track) will be published in the official Springer LNCS MICCAI 2026 Workshop Proceedings.

Can I submit previously published work?

For Track 1 (Proceedings Track), submissions must be original and unpublished. For Track 2 (Non-Archival Track), you are welcome to submit extended abstracts of recently published work or work currently under review elsewhere.

Contact Us

If you have any further questions regarding the workshop, submission guidelines, or sponsorship opportunities, please feel free to email the organizing committee.

Email the organizers: mi4medfm.info@gmail.com